Six Billion Dollars of Light

Why the next generation of AI cannot be built on the wires we have

I started writing these essays because I got tired of not understanding the news.

Every week the headlines told me Nvidia had made another investment, another acquisition, another partnership with a company I had never heard of. Marvell-Celestial. Coherent. Lumentum. Soitec. Names that meant nothing on first read and turned out, on the second or third, to mean something significant. I wanted to know which ones mattered. I started reading. Then I started writing, because writing is how I think.

The trilogy started with the chip. Then memory. This essay is the third, and the one that took the longest to write, because the answer turned out to be stranger than I expected.

The story the news has been telling is that Nvidia is buying optical companies. That is not the story.

What is actually happening is that the wires inside the most expensive infrastructure project in human history have stopped working — and the entire supply chain underneath the next generation of AI is being rebuilt around light, in real time, at a scale most observers have not yet noticed.

Here is what that cluster looks like.

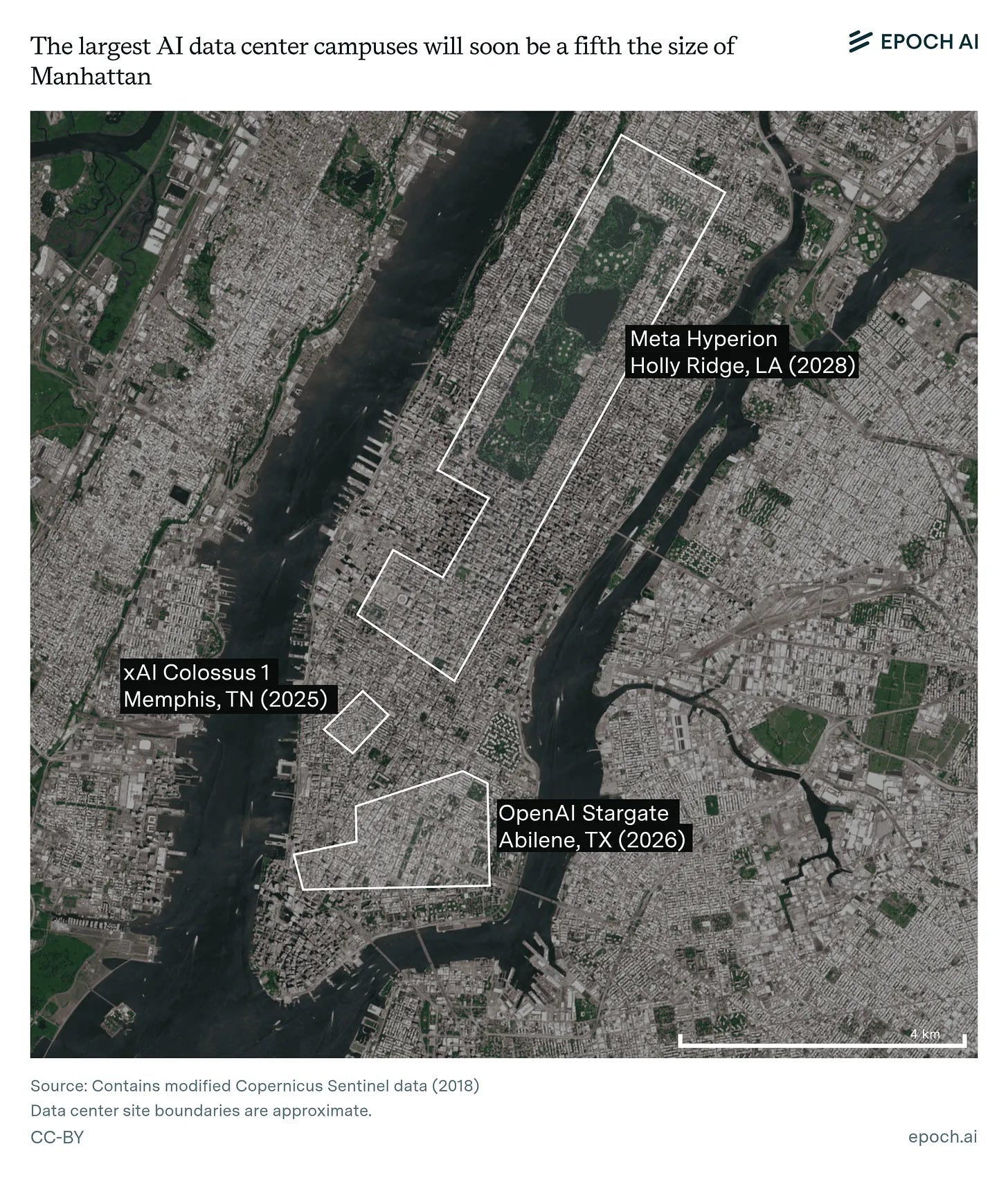

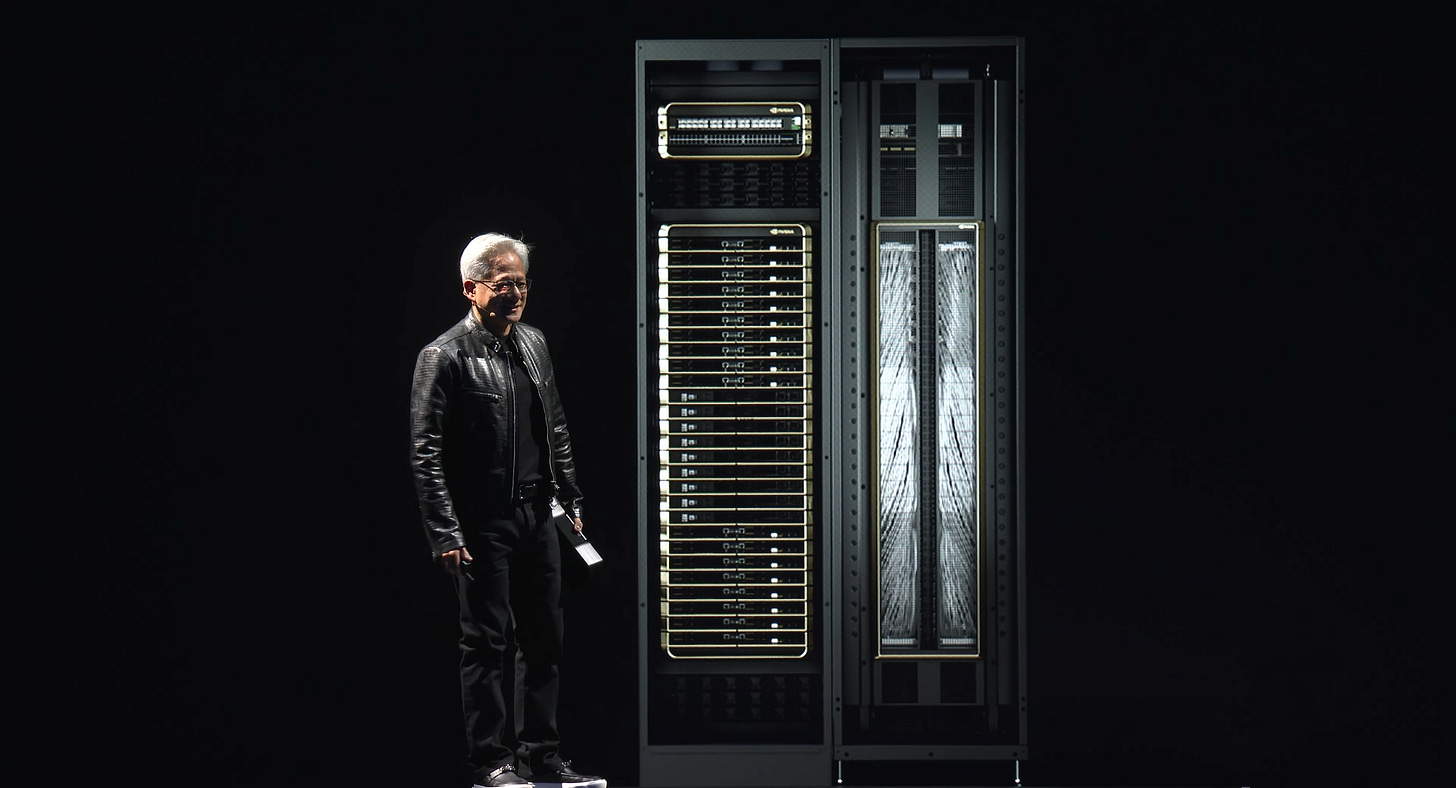

A 2027 frontier training run will use a million GPUs. It will draw 1.4 gigawatts of continuous power — enough to light a small city. It will occupy a building the size of three aircraft carriers placed end to end. Parts of that building are already being poured. The land is bought. The chips are being booked. The capital is committed.

And yet the building cannot be built.

At this scale, the wires inside it do not work.

The I/O Wall

The energy required to move data across a copper wire now exceeds the energy required to compute on the data once it arrives.

Read that sentence twice.

For sixty years of computing history, the chip was the hard part. Moving information between chips was an afterthought, a few millimeters of trace, a soldered connection, a wire. The wire was free. The chip was where the engineering happened.

That equation has now reversed.

A Blackwell rack today draws 1.4 megawatts. Inside it, GPUs sit centimeters apart, communicating at 224 gigabits per lane. At those speeds, a copper trace longer than about a meter loses signal integrity. The signal smears. Errors compound. The thermal load from all that pushing of electrons through resistance becomes its own engineering problem on top of the GPU’s own thermal envelope.

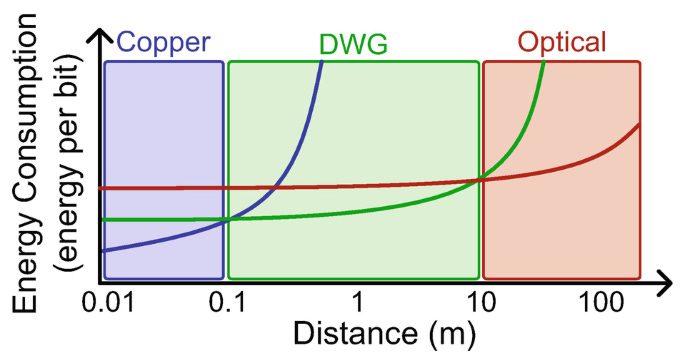

The numbers are blunt. A modern GPU spends roughly ten picojoules of energy to compute on a single bit. Moving that same bit across a meter of copper at 224 gigabits per second now costs thirty to fifty picojoules. More energy to move the answer than to find it.
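To put those figures side by side, here is a back-of-envelope sketch in Python. It uses only the round numbers quoted above; the function names are my own, and the per-lane wattage is plain multiplication under the assumption of a lane running flat out, not a measured figure.

```python
# Back-of-envelope check on the I/O wall, using only the essay's round numbers.
# These are illustrative inputs, not measurements.

COMPUTE_PJ_PER_BIT = 10.0         # energy to compute on one bit
COPPER_PJ_PER_BIT = (30.0, 50.0)  # energy to move one bit a meter over copper
LANE_GBPS = 224.0                 # signaling rate of one lane


def move_to_compute_ratio(move_pj: float, compute_pj: float = COMPUTE_PJ_PER_BIT) -> float:
    """How many times more energy it takes to move a bit than to compute on it."""
    return move_pj / compute_pj


def lane_power_watts(pj_per_bit: float, gbps: float = LANE_GBPS) -> float:
    """Power of one saturated lane: pJ/bit * Gbit/s = 1e-12 J * 1e9 /s = 1e-3 W."""
    return pj_per_bit * gbps * 1e-3


for move_pj in COPPER_PJ_PER_BIT:
    ratio = move_to_compute_ratio(move_pj)
    watts = lane_power_watts(move_pj)
    print(f"{move_pj:.0f} pJ/bit: {ratio:.0f}x the compute energy, {watts:.1f} W per lane")
```

On these assumptions, a single saturated copper lane burns roughly seven to eleven watts just moving bits, three to five times what was spent computing on them.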

DWG: Dielectric Waveguides

Light fixes this. A photon travelling through a strand of glass loses essentially no energy over the distances inside a data center: there is no resistance, no thermal load, no signal smearing. A modern optical link carries 1.6 terabits per second through a strand of glass thinner than a human hair; the equivalent in copper would be a bundle of wires the size of a fist, generating enough heat to need its own cooling. Optical fiber is also good for kilometers where copper fails at a meter. Light is currently more expensive per bit than copper, and that is the only argument copper has left.

At AI cluster scale, the energy and distance advantages are so large that nothing else matters.

For a million GPUs to behave as one machine, the bits have to move between them at energy budgets only light can deliver. Copper has been improved for sixty years and has now hit its physical ceiling. There is no alternative material. There is no third candidate. The cluster either runs on light, or it does not run.

This is not a networking story. It is a physics story. The wires stopped being wires.

The Frame

Five years ago, a transceiver was a networking product made by a networking company. Today, Nvidia is buying laser fabs.

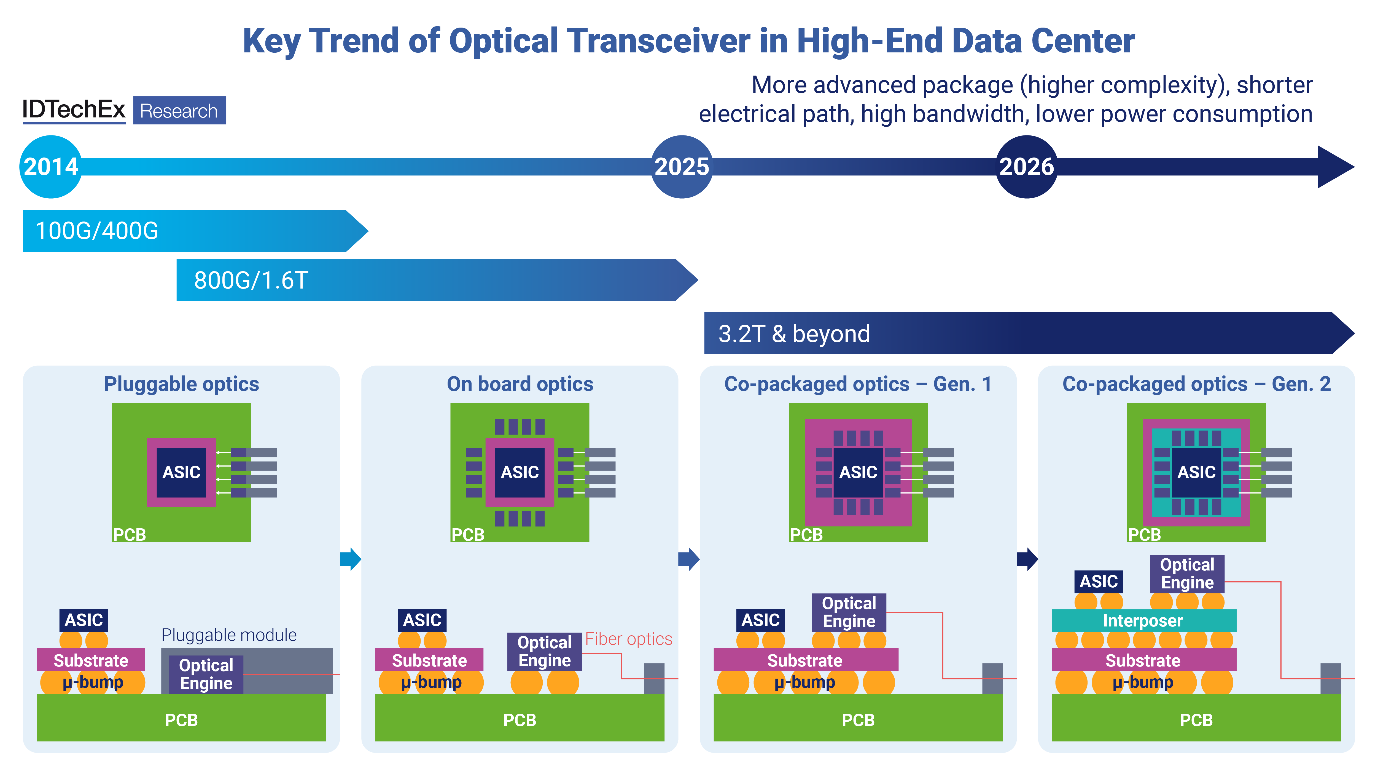

A transceiver is the small finger-sized device that converts electrical signals from a chip into light pulses for the fiber, then converts incoming light back into electricity at the other end. The word is transmitter + receiver — it does both jobs in one box. Today’s data centers contain millions of them, lined up in switch ports.

The arrangement that used to govern this layer was clean. Specialist optical vendors sold lasers to networking primes — Cisco, Arista, Juniper — who packaged them into transceivers and sold them to data centers. The chip company sat at the end of the chain. Today, Nvidia is reaching upstream past all of them, buying the laser makers directly and pulling the optical layer into the package. The networking company in the middle no longer has a layer to occupy.

Optical is becoming part of the GPU.

The same dissolution that made memory part of the GPU is now making light part of the GPU. The boundary between compute and interconnect is dissolving the same way the boundary between logic and memory did.

Optical has actually been winning this argument for thirty years, in three rounds. Across the data center, between buildings or down long halls, optical replaced copper in the 1990s. Between racks, across distances of a few meters, optical took over in the early 2010s; the pluggable transceiver in the switch port is what handles this layer today. Inside a single rack, between chips that sit centimeters apart, copper was good enough until 2024. Blackwell-class GPUs at 1.4MW racks and 224 gigabits per lane have closed that last refuge. The transition this essay is about is the third round: the shortest distance, the highest stakes, and the one Nvidia is paying for itself.

Photonics is what happens when you build the circuits out of light instead of electricity. Silicon photonics is the version that uses the same wafers and the same fabrication processes as the logic chips next to it — which is what makes it possible to bond the two together.

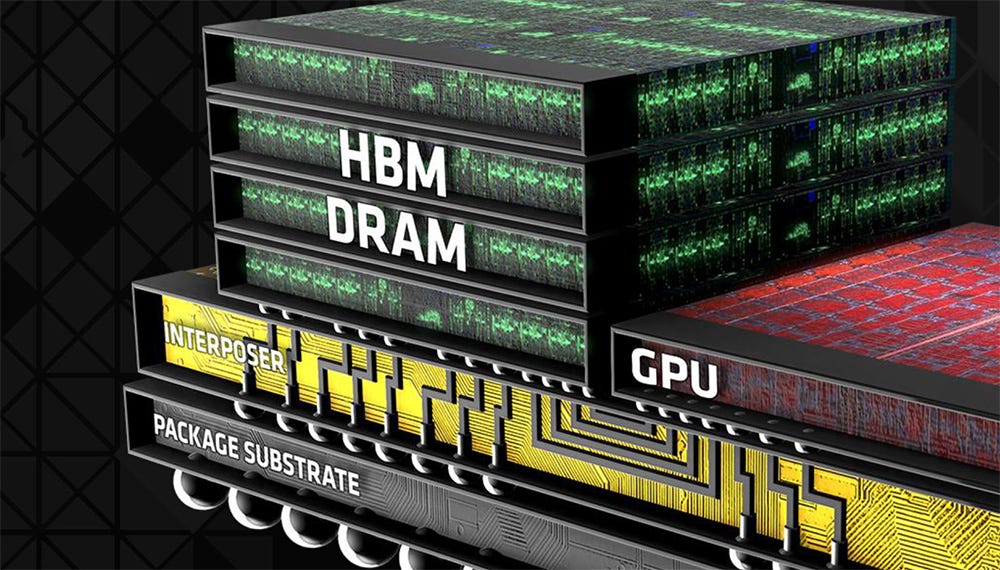

In the second essay in this series, the lesson was that half the cost of a state-of-the-art accelerator is now the memory glued next to the logic. HBM stopped being an external commodity and became a structural part of the chip. The companies that owned the memory layer captured the rerating; the ones that didn’t were left explaining why the GPU was still primarily a chip company’s product.

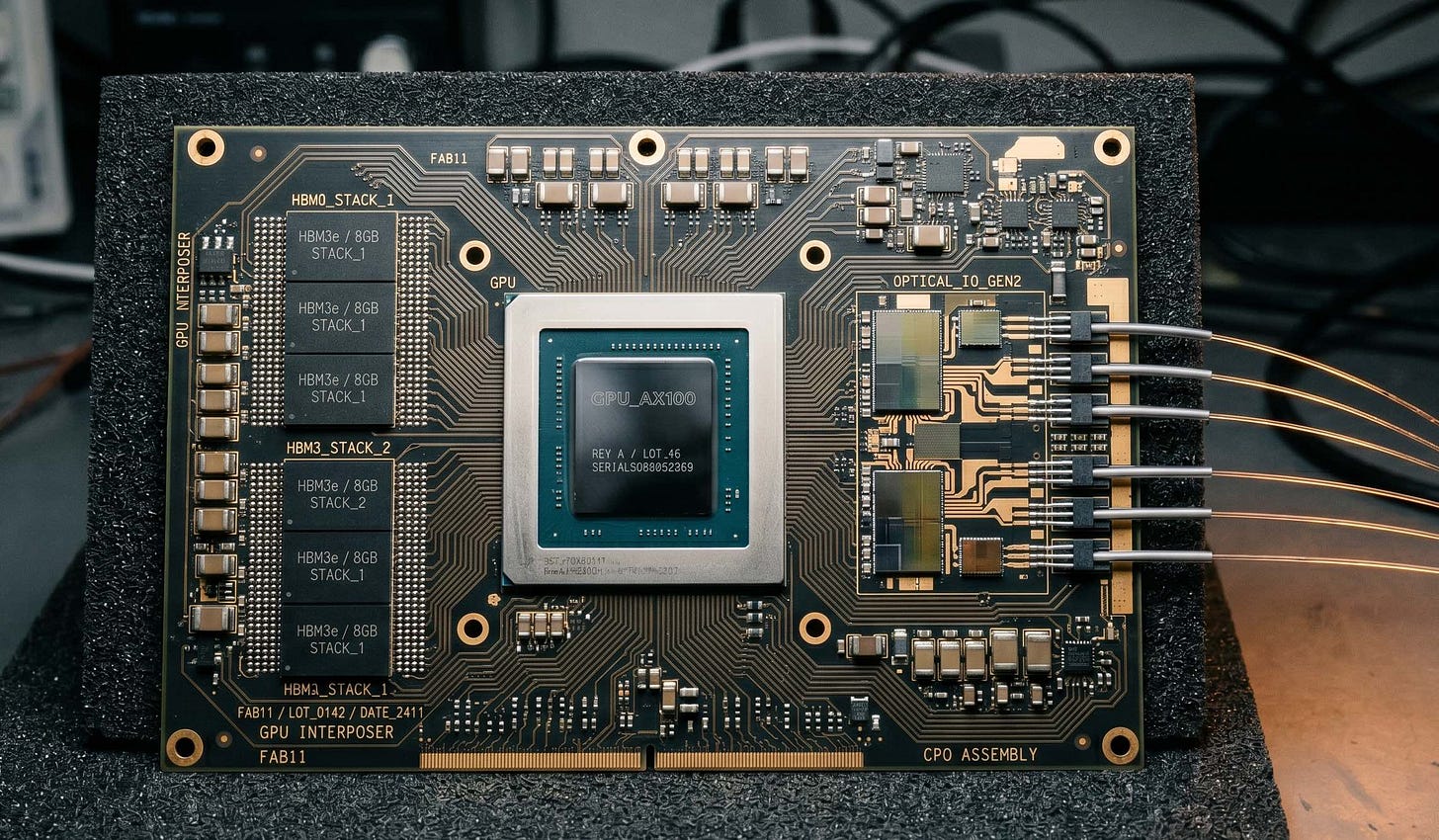

The same thing is happening with light. The transceiver is leaving the network and entering the package. The laser fab is becoming as strategic as the foundry. The destination is co-packaged optics: an architecture where the optical components are bonded directly onto the same substrate as the GPU, eliminating the copper trace between them entirely.

In 2023, networking accounted for roughly ten percent of the cost of a state-of-the-art AI cluster. By the end of 2025, it was fifteen to twenty percent. By 2028, with co-packaged optics scaling, it will be twenty-five to thirty percent. The global optical interconnect market for AI was around ten billion dollars in 2024. The most credible projections put it at seventy billion by 2030. A 7x in six years, in a market most generalist investors are not yet tracking.
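For readers who think in annual growth rates rather than multiples, the 7x can be converted with a few lines of Python. This is a sketch of the standard compound-growth formula applied to the two market figures above; the helper name is my own, and it assumes smooth compounding between 2024 and 2030, which real markets rarely deliver.

```python
# Convert "ten billion in 2024, seventy billion by 2030" into an implied annual rate.
# Inputs are the essay's round figures; smooth compounding is an assumption.

def implied_cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate that takes `start` to `end` in `years` years."""
    return (end / start) ** (1.0 / years) - 1.0


market_2024_bn = 10.0
market_2030_bn = 70.0
years = 2030 - 2024

growth = implied_cagr(market_2024_bn, market_2030_bn, years)
print(f"{market_2030_bn / market_2024_bn:.0f}x over {years} years is about {growth:.0%} a year")
```

The implied rate comes out a little under forty percent a year, sustained for six years.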

From Copper to Light

In March 2026, Nvidia put $4 billion into two laser companies most investors had never heard of, $2 billion each into Coherent and Lumentum. The same month, Marvell paid $3.25 billion to acquire a startup called Celestial AI for a technology called Photonic Fabric. These deals are usually reported separately, as supply-chain stories. They are actually one story, and it is a story about NVLink.

NVLink is Nvidia’s proprietary chip-to-chip interconnect, the high-speed link that lets multiple GPUs inside a single server or rack talk to each other as if they were one machine. Without NVLink, a thousand GPUs are a thousand independent computers. With NVLink, they are one computer. It is the technology that holds the AI factory together, and for the entire history of AI training, it has run on copper.

The transition under way, named precisely, is this: NVLink is moving from copper to light. The optical components being bonded onto the GPU substrate are the optical version of NVLink itself. Co-packaged optics is not a peripheral upgrade or a networking refresh. It is the conversion of the most strategic proprietary technology in the AI hardware stack from one physical medium to another.

Lumentum makes the 200-gigabit-per-lane laser that goes into every advanced AI optical module shipping today. There is almost no alternative supplier at volume. Coherent makes the same lasers, plus the substrate they sit on. There is no third name on the list. Marvell, through Celestial AI, owns the photonic-interconnect technology that lets the new optical NVLink scale beyond a single package. Read together, the three transactions tell us which suppliers gate the conversion. The cheques are a map.

To get a sense of physical scale: a Blackwell-class GPU today is paired with optical transceivers that contain a few dozen lasers, separated from the chip by short copper traces. The Rubin-class accelerators arriving in 2027, built around co-packaged optics, will carry roughly two to four hundred lasers each, bonded directly onto the GPU substrate. Multiply that by the million-GPU clusters now being planned and the AI industry will need several hundred million semiconductor lasers in the next three years. That is more lasers than the entire global telecommunications industry produced in any single year before 2023. Every one of them is grown on an Indium Phosphide wafer that comes from one of three companies. This is the physical reality the cheques are paying to expand.
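The multiplication behind that laser count is simple enough to write down. The sketch below uses only the figures in the paragraph above (two to four hundred lasers per accelerator, a million accelerators per cluster); the names are my own, and it counts a single cluster with no allowance for yield loss or spares.

```python
# Laser demand for one co-packaged-optics cluster, from the essay's round numbers.
# Ignores yield loss, spares, and lasers in switches (a simplifying assumption).

GPUS_PER_CLUSTER = 1_000_000
LASERS_PER_GPU_RANGE = (200, 400)  # "roughly two to four hundred lasers each"


def lasers_for_cluster(gpus: int, lasers_per_gpu: int) -> int:
    """Total lasers bonded onto GPU substrates in one cluster."""
    return gpus * lasers_per_gpu


for per_gpu in LASERS_PER_GPU_RANGE:
    total = lasers_for_cluster(GPUS_PER_CLUSTER, per_gpu)
    print(f"{per_gpu} lasers per GPU: {total / 1e6:.0f} million lasers per cluster")
```

A single million-GPU cluster already lands in the hundreds of millions of lasers, before counting a second cluster.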

There is a second NVLink story underneath. In 2025, Nvidia opened the standard, NVLink Fusion, to admit chips designed by other companies into its ecosystem. AMD, Broadcom, Google, Meta, Microsoft, and AWS had been forming a competing consortium called UALink, essentially everyone except Nvidia. NVLink Fusion was the embrace strategy: come into the walled garden and bring your own chips. Marvell’s purchase of Celestial AI is what makes this work — Marvell becomes the bridge between Nvidia’s ecosystem and the custom-ASIC world. The capital deployment and the open standard are the same move, viewed from two angles: hold the moat and widen the moat at the same time.

The Stack and the Substrate

Three layers sit underneath every optical module Nvidia ships. The market has priced one of them. The other two, almost nobody has heard of.

An optical module is the small package that turns electrical signals into pulses of light and back again: the laser, the modulator, the detector, and the chip that drives them, all bonded together at the edge of a switch or, increasingly, onto the GPU board itself. A modern AI rack contains thousands of them. Five years ago, Nvidia did not ship them; networking companies did. Today, the supply chain that feeds them runs through Nvidia.

Underneath every one of those modules is a substrate, the wafer of material the chip is grown out of, atom by atom. Silicon for compute logic. Indium Phosphide for the lasers that emit light. A specialised hybrid wafer called Photonics-SOI for the silicon photonics chips that route the light once it’s emitted. Substrates are not components you can swap. They are the material the chip is. Change the substrate and you change the fab, the process, the company, the decade. That is why monopolies at this layer are sharper than anywhere else in the supply chain.

Soitec is a French company most American investors cannot pronounce. It holds a global monopoly on the wafer substrate every silicon photonics chip in the world is built on. There is no second source at volume. Soitec’s photonics revenue today is small, roughly €86 million, growing 25 percent annually, and projected to roughly quadruple by 2028 as co-packaged optics scales. The European Union has not yet noticed it has a strategic asset on its hands.

Beneath Soitec sits Indium Phosphide, 60 percent from Sumitomo in Japan and 35 percent from AXT in China. Global wafer production is around 600,000 a year. By 2027, demand is projected at two million. In February 2025, China imposed export controls on indium compounds.
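The size of that gap is easier to see with the arithmetic laid out. This sketch takes the production, demand, and share figures from the paragraph above at face value; the names are my own, and applying the percentage shares to the wafer count is my assumption, since the shares may have been measured some other way.

```python
# Indium Phosphide supply versus projected demand, from the essay's round numbers.
# Assumption: the supplier shares apply to wafer count.

SUPPLY_WAFERS_PER_YEAR = 600_000
DEMAND_WAFERS_2027 = 2_000_000
SHARE_PCT = {"Sumitomo": 60, "AXT": 35}


def supply_gap(supply: int, demand: int) -> int:
    """Wafers per year that demand exceeds current production by."""
    return demand - supply


def wafers_by_supplier(supply: int, share_pct: dict[str, int]) -> dict[str, int]:
    """Split current production by supplier; the remainder goes to 'everyone else'."""
    split = {name: supply * pct // 100 for name, pct in share_pct.items()}
    split["everyone else"] = supply - sum(split.values())
    return split


gap = supply_gap(SUPPLY_WAFERS_PER_YEAR, DEMAND_WAFERS_2027)
multiple = DEMAND_WAFERS_2027 / SUPPLY_WAFERS_PER_YEAR
print(f"Shortfall: {gap:,} wafers a year ({multiple:.1f}x current production)")
for name, wafers in wafers_by_supplier(SUPPLY_WAFERS_PER_YEAR, SHARE_PCT).items():
    print(f"{name}: {wafers:,} wafers a year")
```

On these numbers, production would have to more than triple by 2027, and all but a sliver of today's output comes from two suppliers.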

Downstream of all this, the visible layer has rerated. Innolight, Nvidia’s largest optical module supplier, is up roughly 1,700 percent over twelve months. Eoptolink is up roughly 1,600. Fabrinet, the contract manufacturer most of the industry runs through, has rerated similarly. The market is paying full price for what it can already see. The substrate layer upstream of it, where Soitec sits, has barely moved.

All of which is fragile, of course. A single supplier going offline in Hsinchu would render the whole stack irrelevant overnight. But that risk is the same as the GPU risk, and the market has already decided to live with it.

What the Trilogy Was Actually About

The first essay was about the brain. The second was about memory. This one is about light.

Three different layers of one stack. And the lesson, finally visible from the top, is that the layers stopped being separate around 2024.

In 2020 you could be a memory company or a compute company or a networking company. In 2026 you cannot. The pieces stopped fitting together; they started being the same piece.

This has happened before, and recently. Memory was integrated into the GPU package between 2016 and 2024. Most observers did not notice the dissolution while it was happening; they noticed the rerating after. Light is integrating into the GPU package between 2024 and 2030. The same arc, in real time, on the next layer down.

It is worth being precise about what this does and does not mean. The chips that think, the silicon transistors doing the actual math, will continue to be electrical for the foreseeable future. Light is replacing the moving of information, not the processing of it. What is changing is the boundary of the chip. The chip used to mean a piece of silicon that did math. The chip in 2026 means a system that does math, holds its working memory next to the math, and now talks to its neighbours at the speed of light. At each step, the boundary widened.

The optical buildout is the precondition for the largest concentration of compute power in human history. Almost nobody outside the labs is thinking about it that way. The cluster that does not exist yet is being built one layer at a time, mostly in the dark, by people who will not be famous for it.

The trilogy was never really about three layers. It was about one observation, told three ways: the chip is no longer the unit of analysis. The system is. And the people who see the system whole will build what comes next.

Disclaimer

This post is for informational and educational purposes only. It does not constitute investment advice, a recommendation, or an offer to buy or sell any security. I, members of my family, or entities I am associated with may hold positions in the companies or sectors discussed, and those positions may change at any time without notice. Past performance is not indicative of future results. You should consult your own financial, tax, and legal advisors before making any investment decision.

Appendix

Companies Mentioned (Map, Not Recommendations)

Substrates — the upstream chokepoints

Soitec (EPA: SOI) — French, monopoly supplier of Photonics-SOI wafer substrate for silicon photonics

Sumitomo Electric (TYO: 5802) — Japanese, leading supplier of Indium Phosphide substrates for laser chips

AXT Inc. (NASDAQ: AXTI) — American with Chinese manufacturing exposure; the second InP substrate name

Shin-Etsu Chemical (TYO: 4063) — Japanese, broader semiconductor materials with photonics adjacency

Lasers and photonic devices

Coherent (NYSE: COHR) — American, 60-year native optical company; lasers and photonic components; one of the two laser-layer companies receiving Nvidia capital in March 2026

Lumentum (NASDAQ: LITE) — American, near-monopoly on 200G EML lasers; the other March 2026 Nvidia counterparty

Applied Optoelectronics (NASDAQ: AAOI) — American, in-house InP laser fab using proprietary MBE process

IPG Photonics (NASDAQ: IPGP) — vertically integrated fiber laser specialist (industrial, not data center)

Optical engines and PIC platforms — the M&A war layer

Marvell Technology (NASDAQ: MRVL) — American, acquired Celestial AI for Photonic Fabric (~$3.25bn)

Credo Technology (NASDAQ: CRDO) — American, acquired DustPhotonics for silicon photonics IP

Astera Labs (NASDAQ: ALAB) — American, acquired aiXscale for fiber-chip coupling

POET Technologies (NASDAQ: POET) — American/Canadian, optical interposer platform

Ayar Labs (private) — Intel-backed, co-packaged optics specialist; IPO candidate

Lightmatter (private) — Nvidia-backed, photonic computing and interconnect

Foundries and process platforms

TSMC (NYSE: TSM) — silicon photonics platform (COUPE) and advanced packaging

Tower Semiconductor (NASDAQ: TSEM) — leading silicon photonics contract foundry

GlobalFoundries (NASDAQ: GFS) — entering silicon photonics

Quantum Computing Inc. (NASDAQ: QUBT) — first US TFLN-only foundry, in Tempe AZ

Modules and assembly — the volume layer

Innolight Technology (SHE: 300308) — Chinese, Nvidia’s largest optical module supplier

Eoptolink Technology (SHE: 300502) — Chinese, ~20% share of Nvidia 800G LPO

Fabrinet (NYSE: FN) — Thai contract manufacturer for high-end optical components

Coherent and Lumentum (above) — also competitors in the module layer

Cisco (NASDAQ: CSCO) — vertically integrated, owns Acacia (DSP) and Luxtera (silicon photonics)

Nokia (NYSE: NOK) — vertically integrated through Infinera acquisition; “the only vertically integrated Western optical vendor”

Fiber, cabling, and physical infrastructure

Corning (NYSE: GLW) — dominant AI-optimized fiber supplier

Fujikura (TYO: 5803) — Japanese fiber and fusion splicer specialist; +170% YTD on AI demand

Test and measurement

Anritsu Corporation (TYO: 6754) — Japanese, owns the only complete traceable 145 GHz electro-optical measurement system; reference for 3.2T systems

Keysight Technologies (NYSE: KEYS) — American T&M giant with photonics exposure

Connectivity ICs (electro-optical bridge layer)

Marvell (above) — DSP leadership through 2021 Inphi acquisition

MACOM Technology (NASDAQ: MTSI) — analog ICs with in-house InP laser fab

Semtech (NASDAQ: SMTC) — analog ICs; entered laser layer through 2026 HieFo acquisition

Buyers

Nvidia (NASDAQ: NVDA), AMD (NASDAQ: AMD) — the largest single customers

Hyperscalers (Amazon, Microsoft, Google, Meta) — direct buyers via custom silicon programs

Key Terms

Optical interconnect — moving data between chips, racks, or data centers using light rather than electrical signals

Silicon photonics (SiPh) — building photonic devices on silicon wafers using semiconductor manufacturing techniques

CPO (Co-Packaged Optics) — bonding optical engines directly onto the same substrate as the processor; eliminates the pluggable transceiver step

LPO (Linear Pluggable Optics) — pluggable optical module with the digital signal processor stripped out; 30–50% power savings versus traditional pluggables

NPO (Near-Packaged Optics) — optical engine placed on the switch board but still in a separate package from the ASIC; the bridge step between LPO and CPO

PIC (Photonic Integrated Circuit) — multiple photonic functions integrated on a single chip

InP (Indium Phosphide) — compound semiconductor substrate used to manufacture laser chips for telecom and data center applications

Photonics-SOI — specialized silicon-on-insulator wafer substrate used for silicon photonics chips; Soitec holds a global monopoly at volume

EML (Electro-absorption Modulated Laser) — laser type used in 200G-per-lane optical modules; the workhorse of the 2026 cycle

TFLN (Thin Film Lithium Niobate) — next-generation modulator material with higher efficiency than silicon; early-stage commercialization

Optical engine — the integrated unit that converts electrical signals to optical signals and back, typically including laser, modulator, and photodetector

Transceiver — the pluggable module that handles optical-to-electrical conversion at switch ports today

Scale-up vs scale-out — scale-up is GPU-to-GPU communication inside a rack (where CPO matters most); scale-out is between racks across the data center

NVLink Fusion — Nvidia’s program for admitting non-Nvidia chips to NVLink, its proprietary scale-up interconnect

UALink — the open consortium scale-up interconnect standard backed by AMD, Broadcom, Google, Meta, Microsoft, and AWS — essentially everyone except Nvidia

224G per lane — the per-lane signaling rate at which copper traces lose signal integrity beyond approximately one meter; the technical reason optical is becoming structural

Further Reading

These are the sources I returned to most while writing this. Where I have linked to a publication rather than a single piece, it is because the writer’s whole body of work matters more than any one essay.

The big-picture frame

Leopold Aschenbrenner, Situational Awareness: The Decade Ahead — situational-awareness.ai. The canonical argument for why the AI compute buildout is the most consequential industrial project of the century. The “Racing to the Trillion-Dollar Cluster” section sits underneath this essay’s opening.

Chris Miller, Chip War (2022) — the definitive book on the geopolitics of semiconductors. The Taiwan chapters are essential context for the Hsinchu supply risk raised in this essay.

Ed Conway, Material World (2023) — broader frame on the physical inputs to the modern economy. The chapters on rare materials are a useful primer for the substrate chokepoint argument.

The optical value chain — specialist research

Damnang’s Substack — damnang.substack.com. The most granular public-domain mapping of the optical value chain. Damnang’s Optical Investment Map v1.0 (April 2026) breaks the space into seven layers and 50+ tickers; The War of Light: A Laser Shortage covers the Nvidia-Coherent-Lumentum cheques in detail. If this essay made you want to go deeper, start there.

SemiAnalysis (Dylan Patel and team) — semianalysis.com. Their work on Nvidia’s optical roadmap, NVLink Fusion versus UALink, and the co-packaged optics transition is the most important ongoing reporting in this space.

LightCounting — lightcounting.com. The standard industry source for optical module market share and forecast data.

Ongoing primary research

Stratechery (Ben Thompson) — stratechery.com. Strategic frame on Nvidia and the platform dynamics of the AI compute layer.

Fabricated Knowledge (Doug O’Laughlin) — fabricatedknowledge.com. Long-form semiconductor analysis on Substack with regular optical coverage.

Digits to Dollars (Jay Goldberg) — digitstodollars.com. Industry-insider commentary on networking silicon.

The Next Platform — nextplatform.com. The deepest publicly available reporting on hyperscaler infrastructure architecture.

Industry conferences and standards bodies

OFC (Optical Fiber Communication Conference) — the annual event where the next generation of optical technology is announced. The 2026 program (just concluded) was the moment co-packaged optics moved from concept to roadmap.

OIF (Optical Internetworking Forum) — oiforum.com. Standards body for high-speed optical interconnect; their CEI specifications drive the 224G and 448G transitions.

Open Compute Project (OCP) — opencompute.org. Hyperscaler-led infrastructure standards; their CPO and OCS working groups are where the volume-deployment specifications get set.

Primary source documents

Nvidia GTC keynotes (Jensen Huang) — for the customer side of the optical demand picture and the strategic logic of the laser-fab investments.

Coherent, Lumentum, Marvell quarterly earnings calls — the supply-side picture, particularly post the March 2026 Nvidia commitments.

Nvidia’s 8-K filings around the March 2026 investments in Coherent and Lumentum — the only public document with the full structure of the underwriting commitments.

TSMC quarterly earnings calls — for advanced packaging (CoWoS) capacity dynamics that gate the optical buildout.

Policy and national security

CSIS (Center for Strategic and International Studies) — csis.org. Their semiconductor supply chain reports increasingly cover optical and the substrate chokepoint argument.

US Commerce Department CHIPS Act announcements — for what is and isn’t being funded on the optical layer.

US Bureau of Industry and Security (BIS) export control filings — context for the February 2025 Chinese indium compound restrictions that reshaped the InP substrate picture.

European Chips Act updates — relevant because of Soitec’s strategic position; Brussels has not yet woken up to what it has.

Prior essays in this series:

The Other AI Trade — why the boring CPU chip at the center of every server is back at the center of the trade.

Half of Nvidia — why the most important number in the AI trade is no longer how many GPUs Nvidia ships, but how much memory is glued to each one.
